You pivoted to data centers. Now the data centers are pivoting underneath you. The next GPU generation doubles the power, replaces air with pressurized water, and turns your structural assumptions upside down.

TL;DR for Transitioning GCs

If you’re a general contractor moving into data centers from office or multifamily — or even a conventional data center builder — here’s what you need to know about the next generation of AI facilities in 300 words:

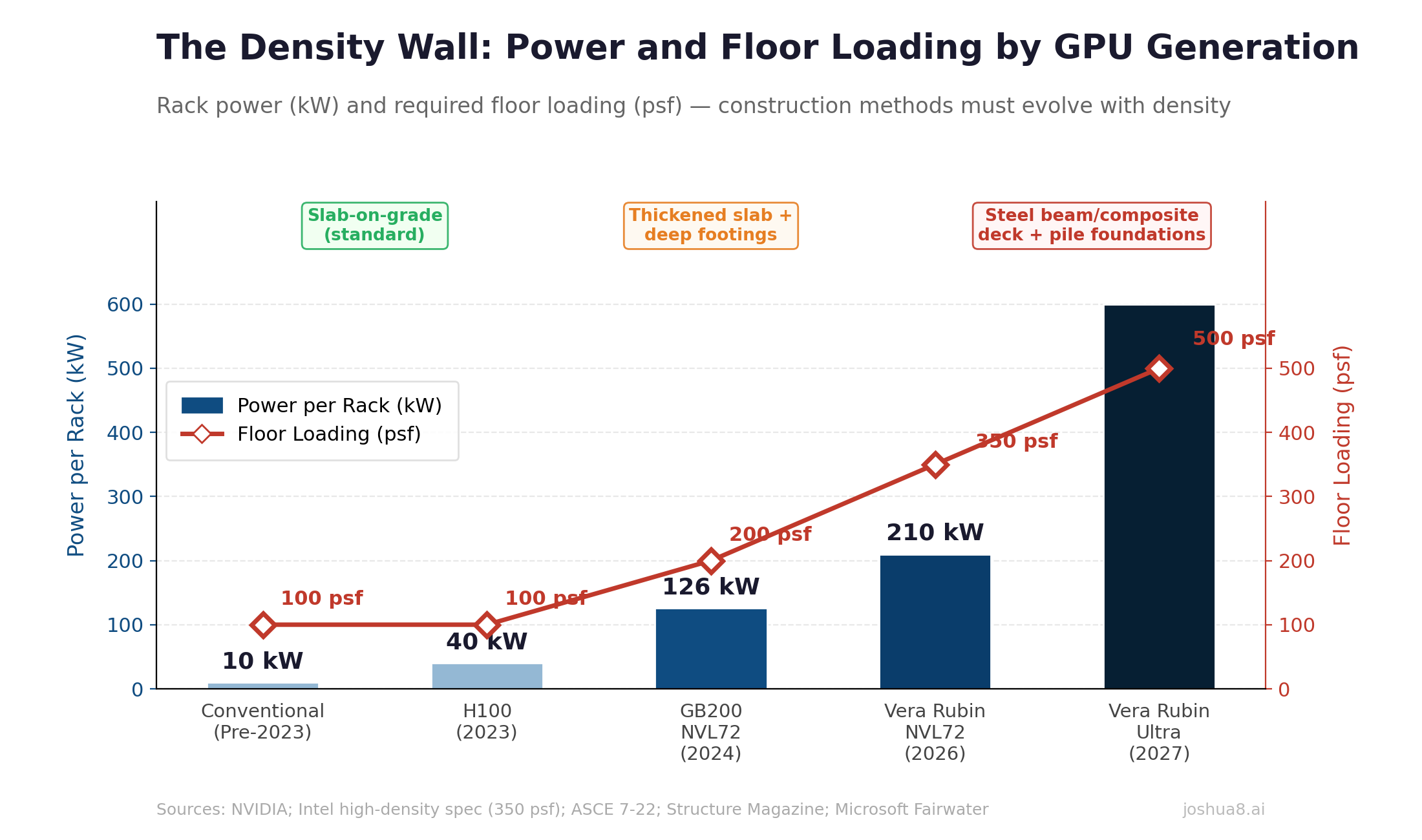

The buildings change completely. NVIDIA’s Vera Rubin platform (shipping H2 2026) draws 190–230 kW per rack, nearly double the current Blackwell generation. Vera Rubin Ultra (2027) hits 600 kW per rack. Every rack is liquid-cooled. No air conditioning. Pressurized water/glycol piping runs to every cabinet in the building.

Your structural scope gets heavier. Forget the 100 psf data center floor spec you’ve been hearing about. AI-dense liquid-cooled racks with Coolant Distribution Units push floor loads to 250–350 psf — manufacturing plant territory. You’re likely looking at steel beam and composite deck systems or thickened slabs with deep foundations, not the post-tensioned concrete slabs you pour for office towers. Microsoft’s Wisconsin AI facility drove 46.6 miles of deep foundation piles.

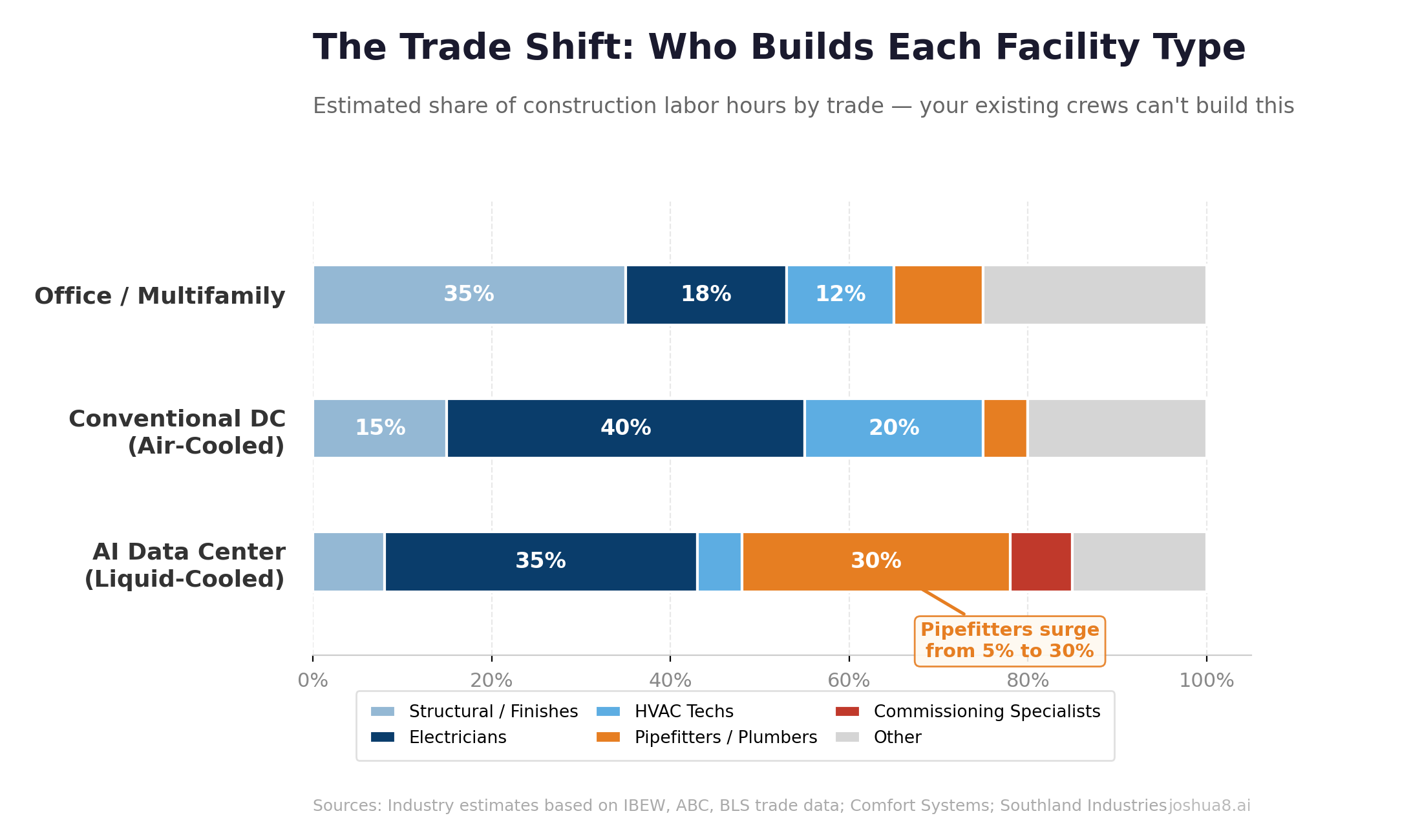

Your mechanical subs change entirely. The HVAC guys who ran CRAC units in conventional data centers can’t build this. You need pipefitters and plumbers — the same trades that build LNG terminals and semiconductor fabs. The U.S. is projected to be short 550,000 plumbers by 2027, and those workers are simultaneously being pulled toward LNG, chip fabs, and now data center liquid cooling.

Water leaks are catastrophic. A single cooling loop failure can destroy millions in GPU hardware. Downtime costs $5,000–$10,000 per minute. Every coupling needs leak detection sensors. Auto-shutoff within 60 seconds is non-negotiable. Your warranty liability is now equipment-focused: one rack of Vera Rubin GPUs ruined by a leak is worth more than an entire floor of office finishes.

Your power scope explodes. Forget “utility feed plus diesel backup.” Vera Rubin-era facilities need 138–345kV transmission connections, customer-owned substations, and increasingly on-site generation — gas turbines or fuel cells — as the primary power source. Battery energy storage (BESS) is replacing diesel generators with 4–8 hours of backup. Equipment lead times run 12–18 months. This is power plant construction bolted onto your data center project.

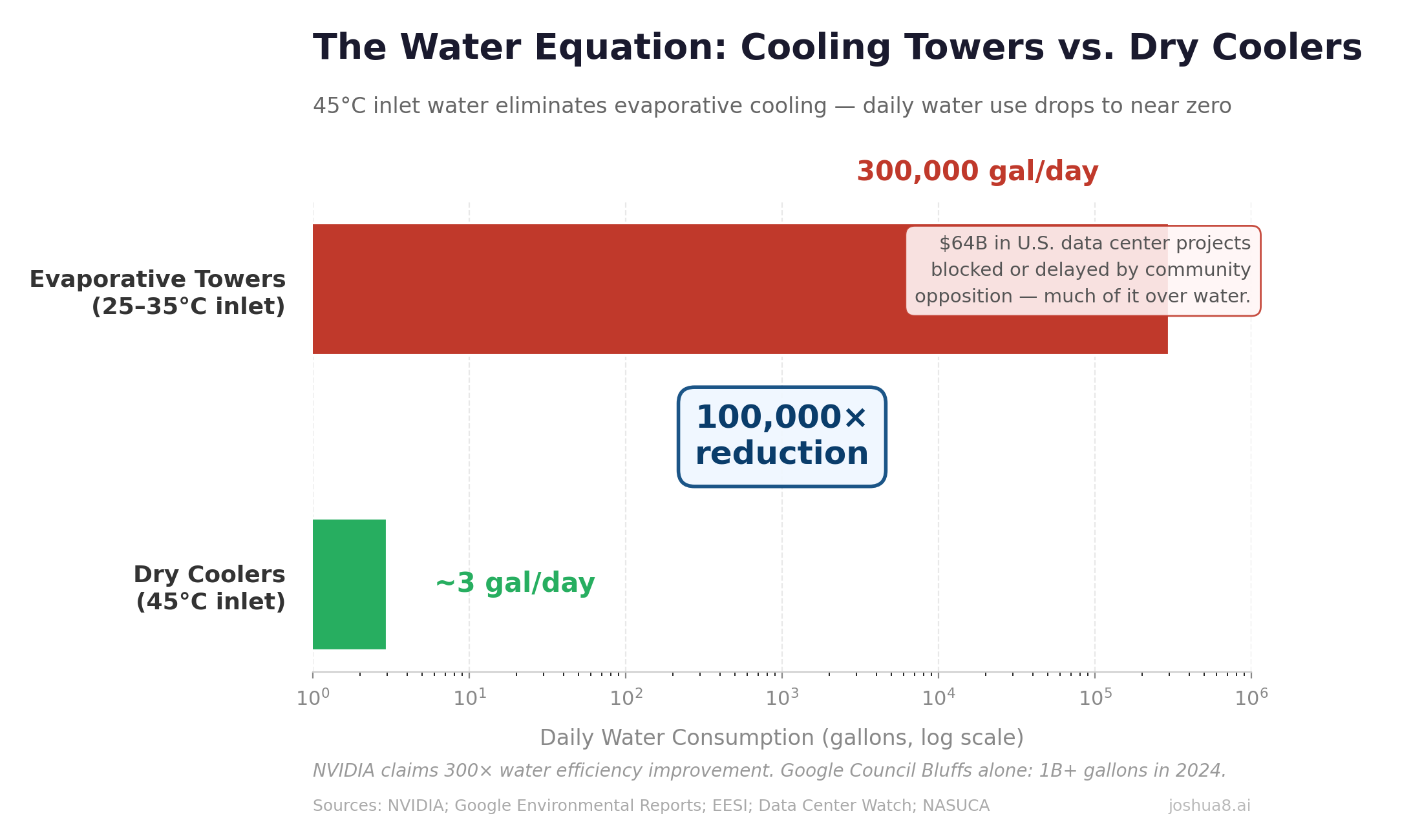

The good news: the 45°C inlet water spec eliminates cooling towers and their massive water consumption, which is the single biggest political obstacle to new data center construction. Dry coolers replace evaporative towers. Almost no water consumed.

Read on for the details.

Why This Matters If You’re a Transitioning GC

If you read the previous post in this series — The Builder’s Pivot — you saw the case for mid-market GCs moving from declining office and multifamily pipelines into the data center construction market. The entry strategies we outlined — powered shell, adjacent infrastructure, JV partnerships, colocation and edge facilities — are sound. But they were written for today’s data center market. What’s coming next changes the game for everyone, including GCs who already build conventional data centers.

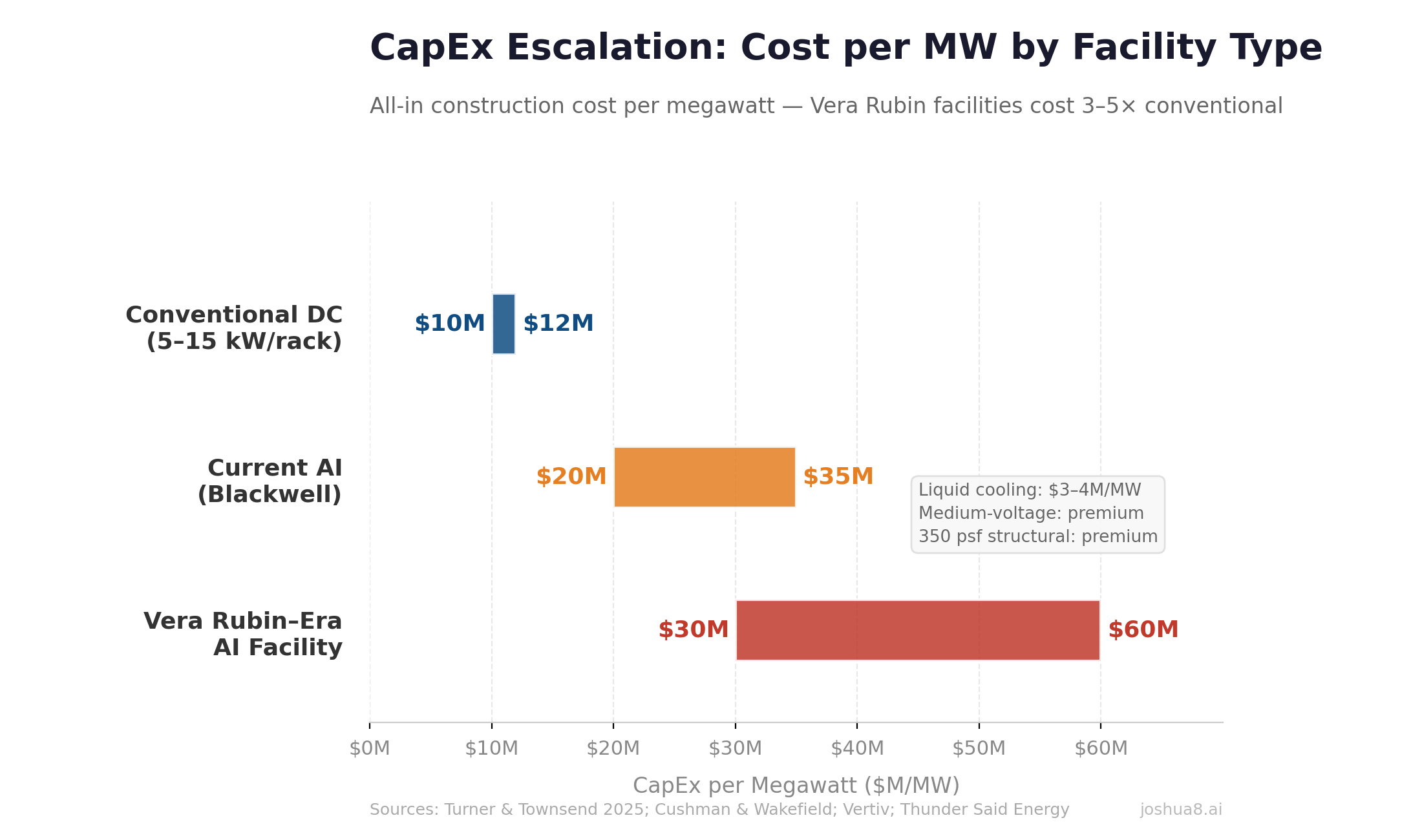

Here’s the uncomfortable truth: a facility designed for conventional colocation (5–15 kW per rack, air-cooled, 100 psf floor loading, HVAC mechanical systems) has almost nothing in common with an AI training facility designed for Vera Rubin (200+ kW per rack, liquid-cooled, 350 psf floor loading, pressurized piping, 800 VDC power). The cost structure, the trades, the structural systems, the risk profile, and the commissioning process are all fundamentally different.

If you’re pivoting from office construction into data centers, you need to understand that you’re not pivoting into one market — you’re pivoting into a market that is itself splitting in two. Conventional colocation and enterprise data centers will continue to exist, but the growth — and the money — is in AI-optimized facilities. And those facilities look more like chemical plants than office buildings.

The Power Density Trajectory

Here is the trajectory driving all of this:

| Generation | Year | Power per Rack |
|---|---|---|
| Conventional (air-cooled) | Pre-2023 | 5–15 kW |
| H100 (air-cooled) | 2023 | ~40 kW |
| GB200 NVL72 (liquid-cooled) | 2024 | 120–132 kW |
| Vera Rubin NVL72 | 2026 | 190–230 kW |
| Vera Rubin Ultra NVL576 | 2027 | 600 kW |

NVIDIA’s Vera Rubin platform, shipping in the second half of 2026, pushes chip-level power draw from Blackwell’s 1,200 watts to 1,800–2,300 watts per GPU. A single Vera Rubin NVL72 rack (72 GPUs and 36 CPUs in one cabinet) draws 190 to 230 kilowatts. Measured against a 5 kW conventional rack, that’s roughly a 40× increase in rack power density in under five years. And NVIDIA has already announced Vera Rubin Ultra for the second half of 2027, pushing to 600 kilowatts per rack.

A single Vera Rubin Ultra rack will consume more electricity than 400 American homes.
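To sanity-check those multiples yourself, here is a minimal Python sketch that works from the rack figures in the table. The 1.2 kW average household draw is an assumption (roughly 10,500 kWh per year), not a number from this post, and the function names are illustrative.

```python
# Rack power ranges in kW, taken from the table above.
RACK_KW = {
    "Conventional (air-cooled)": (5, 15),
    "H100 (air-cooled)": (40, 40),
    "GB200 NVL72": (120, 132),
    "Vera Rubin NVL72": (190, 230),
    "Vera Rubin Ultra NVL576": (600, 600),
}

# Assumption, not from the article: an average U.S. home draws about
# 1.2 kW on a continuous basis (roughly 10,500 kWh per year).
AVG_HOME_KW = 1.2


def density_multiple(new_kw: float, old_kw: float) -> float:
    """How many times more power the newer rack draws than the older one."""
    return new_kw / old_kw


def homes_equivalent(rack_kw: float, home_kw: float = AVG_HOME_KW) -> float:
    """Number of average homes with the same continuous draw as one rack."""
    return rack_kw / home_kw


if __name__ == "__main__":
    conv_low, conv_high = RACK_KW["Conventional (air-cooled)"]
    vr_low, vr_high = RACK_KW["Vera Rubin NVL72"]
    print(f"Smallest multiple: {density_multiple(vr_low, conv_high):.1f}x")
    print(f"Largest multiple:  {density_multiple(vr_high, conv_low):.1f}x")
    print(f"Homes per Ultra rack: {homes_equivalent(600):.0f}")
```

The spread between the smallest and largest multiple is wide because the conventional range itself spans 5 to 15 kW; the headline multiple sits near the top of that spread.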

This isn’t speculative. AWS, Microsoft Azure, Google Cloud, and Oracle Cloud are all named early adopters. CoreWeave, Lambda, and Nebius are in the queue. The facilities to house these systems need to be under construction right now.

The Structural Problem: This Is Not a 100 PSF Floor

If you’ve been building offices, you’re used to designing for 50–80 psf live loads. If you’ve been looking at conventional data center specs, you’ve seen the ASCE 7-22 baseline of 100 psf for computer access floors. Neither number is remotely adequate for what’s coming.

Liquid-cooled AI racks are heavy. A fully loaded high-density rack with Coolant Distribution Units, piping manifolds, and coolant weighs substantially more than an air-cooled equivalent. Intel’s specification for high-density deployments calls for 350 psf. Load analysis published in Structure Magazine shows that a typical data hall module — racks, containment, PDUs, piping, maintenance access — can exceed 240 psf even at medium density, and significantly higher for AI-dense configurations.
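To see how a rack weight turns into a psf number, divide the total weight by the floor area it is spread over. The sketch below does only that. The 4,000 lb rack weight and both areas are hypothetical placeholders, not vendor or code values, and a real design depends on your structural engineer’s load analysis.

```python
def distributed_load_psf(total_weight_lb: float, area_sqft: float) -> float:
    """Uniform load in pounds per square foot over the given floor area."""
    if area_sqft <= 0:
        raise ValueError("area_sqft must be positive")
    return total_weight_lb / area_sqft


# Thresholds quoted in the article.
CONVENTIONAL_PSF = 100
HIGH_DENSITY_PSF = 350

if __name__ == "__main__":
    # Hypothetical inputs for illustration only.
    rack_weight_lb = 4000.0      # loaded rack plus coolant and manifolds
    footprint_sqft = 2.0 * 4.0   # rack footprint alone
    tributary_sqft = 2.0 * 8.0   # footprint plus a share of the aisles

    over_footprint = distributed_load_psf(rack_weight_lb, footprint_sqft)
    over_tributary = distributed_load_psf(rack_weight_lb, tributary_sqft)
    print(f"Over footprint: {over_footprint:.0f} psf")
    print(f"Over tributary area: {over_tributary:.0f} psf")
    print(f"Exceeds 100 psf baseline: {over_tributary > CONVENTIONAL_PSF}")
```

The two results differ by a factor of two, which is why the area you assume matters as much as the rack weight. The concentrated load under the rack feet is a separate check that this sketch does not attempt.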

This changes what you’re building from the ground up.

Slab-on-grade won’t cut it at standard thickness. A conventional 6-inch slab designed for 100 psf doesn’t have the punching shear resistance for concentrated rack loads at 350 psf. You need either significantly thickened slabs (10–12 inches) with deeper footings, or you need to move to a different structural system entirely.

Steel beam and composite deck systems are emerging as the preferred approach for high-density facilities. They offer significantly lower dead load than equivalent concrete systems, better seismic performance, faster erection, and — critically for a market where GPU generations change every 18 months — easier future modification. You can reconfigure a steel-framed data hall without jackhammering post-tensioned tendons.

Post-tensioned concrete, the system many office and multifamily GCs know best, has advantages in multi-story facilities (thinner slabs, more floors in the same height) but is less flexible for the constant reconfiguration that AI facilities require. And PT is a specialty trade — if you’re already struggling to find electrical and mechanical subs, adding post-tensioning contractors to your shortage list doesn’t help.

Deep foundations are becoming standard at hyperscale. Microsoft’s Fairwater AI data center in Wisconsin drove 46.6 miles of deep foundation piles and used 26.5 million pounds of structural steel. That’s not an office building foundation program. That’s industrial infrastructure. Geotechnical modeling and pile design need to happen early in preconstruction — much earlier than most commercial GCs are accustomed to.

For a GC transitioning from office or multifamily, the takeaway is this: the structural scope of a Vera Rubin-era data center looks more like a manufacturing plant or a semiconductor fab than anything in your current portfolio. If your structural engineering relationships are with firms that design parking garages and concrete-frame offices, you need new partners.

The 45°C Revolution: Why Cooling Towers Disappear

At 40 kW per rack, air cooling was already struggling. At 120 kW, it was impossible — Blackwell was liquid-cooled from day one. At 190–230 kW, there is no air-cooling discussion to be had. Every watt of heat must be removed through direct-to-chip liquid cooling loops running through cold plates mounted directly on the GPU dies.

But here’s what makes the Vera Rubin cooling story transformative — and genuinely good news for the industry: NVIDIA designed the platform to operate with 45°C inlet water.

Traditional liquid-cooled data centers run 25–35°C inlet water. At those temperatures, you need mechanical chillers that reject heat through evaporative cooling towers consuming enormous amounts of water. A mid-sized data center with cooling towers uses roughly 300,000 gallons per day. Google’s single facility in Council Bluffs, Iowa consumed over 1 billion gallons in 2024. Over $64 billion in U.S. data center projects have been blocked or delayed by community opposition, much of it driven by water concerns.

At 45°C inlet water, you can reject heat through dry coolers — large air-to-water heat exchangers that transfer heat to ambient air. No evaporation. No water consumption. No cooling towers. The physics work because the cooling loop returns water at 50–55°C, and ambient air temperature is below 45°C in virtually every U.S. market for virtually every hour of the year.

The practical impact: a facility that would have consumed 300,000 gallons per day with cooling towers needs less than 1,000 gallons per year for loop maintenance with dry coolers. NVIDIA claims Vera Rubin delivers 300× more water efficiency than air-cooled systems.
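Annualizing the two figures makes the gap easier to quote in a community meeting. This short sketch uses only the numbers in the paragraph above; the function name is illustrative.

```python
TOWER_GALLONS_PER_DAY = 300_000      # cooling-tower facility, from the article
DRY_COOLER_GALLONS_PER_YEAR = 1_000  # loop maintenance, from the article


def annual_gallons(gallons_per_day: float, days: int = 365) -> float:
    """Scale a daily water figure to a full year."""
    return gallons_per_day * days


if __name__ == "__main__":
    tower_annual = annual_gallons(TOWER_GALLONS_PER_DAY)
    saved = tower_annual - DRY_COOLER_GALLONS_PER_YEAR
    ratio = tower_annual / DRY_COOLER_GALLONS_PER_YEAR
    print(f"Cooling towers: {tower_annual:,.0f} gallons per year")
    print(f"Dry coolers:    {DRY_COOLER_GALLONS_PER_YEAR:,.0f} gallons per year")
    print(f"Water avoided:  {saved:,.0f} gallons per year ({ratio:,.0f}x less)")
```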

This is the technology that makes data centers politically buildable again. For developers fighting community opposition, the pitch changes from “we need a billion gallons of your water” to “we run a closed-loop system that uses less water than a single-family home.”

The Leak Problem: Pressurized Water Meets Million-Dollar Hardware

Here’s the part that should keep every GC and facility owner up at night: you’re now running pressurized fluid loops through a building filled with equipment worth $50,000–$100,000+ per GPU. A Vera Rubin NVL72 rack holds 72 GPUs. Do the math on what a single leaked coupling can destroy.
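Here is that math as a small Python sketch, using the per-GPU price range quoted above. It counts GPUs only; CPUs, networking, and the rack itself are left out, so treat the result as a floor.

```python
GPUS_PER_RACK = 72                        # Vera Rubin NVL72, from the article
GPU_VALUE_RANGE_USD = (50_000, 100_000)   # per-GPU range, from the article


def rack_gpu_value(gpus: int, value_per_gpu_usd: float) -> float:
    """GPU replacement value for one rack, ignoring all other hardware."""
    return gpus * value_per_gpu_usd


if __name__ == "__main__":
    low = rack_gpu_value(GPUS_PER_RACK, GPU_VALUE_RANGE_USD[0])
    high = rack_gpu_value(GPUS_PER_RACK, GPU_VALUE_RANGE_USD[1])
    print(f"GPU value at risk per rack: ${low:,.0f} to ${high:,.0f}")
```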

The cooling loops typically run water/propylene glycol mixtures — conductive enough to short-circuit electronics, corrosive enough to damage circuit boards over time. Ponemon Institute research shows roughly 35% of unplanned data center outages involve some form of cooling system failure or water incursion. Downtime costs $5,000–$10,000 per minute, and a major equipment loss from a cooling loop failure can run into the tens of millions.

Google’s Paris data center experienced a cooling pipe leak in a shared colocation facility that triggered a fire and forced extended outages lasting weeks. That was in a conventional facility. In a liquid-cooled AI facility where piping runs to every rack position, the attack surface for leaks is dramatically larger.

What this means for construction quality and commissioning:

Every coupling, manifold connection, and CDU fitting is a potential failure point. Leak detection rope sensors must run along every fluid path. Drip trays and secondary containment must sit under every rack and distribution point. Automated shutoff valves linked to the building management system must be able to isolate a leak within 60 seconds — FM Global’s FM7745 standard calls for 30-second response. A delayed response of just 5 minutes can release 100 liters of coolant.
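The response-time numbers above imply a leak rate you can reuse. The sketch below assumes a constant rate derived from the 100 liters in 5 minutes figure, which is a simplification: a real leak depends on loop pressure, pipe size, and the failure mode.

```python
# Implied by the article's example: 100 liters released in 5 minutes.
LEAK_RATE_L_PER_MIN = 100 / 5


def coolant_released_liters(
    response_seconds: float, rate_l_per_min: float = LEAK_RATE_L_PER_MIN
) -> float:
    """Coolant released before isolation, assuming a constant leak rate."""
    return rate_l_per_min * response_seconds / 60


if __name__ == "__main__":
    for label, seconds in [("30 s", 30), ("60 s", 60), ("5 min", 300)]:
        liters = coolant_released_liters(seconds)
        print(f"{label:>5} response: {liters:.0f} liters released")
```

Under that assumption, halving the response time halves the coolant on the floor, which is the practical case for the tighter 30-second target.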

Pressure testing must be documented at every segment before the system goes live. Flow balancing — ensuring each rack gets the right volume of coolant at the right temperature and pressure — requires precision approaching pharmaceutical manufacturing.
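For a sense of what “the right volume of coolant” means, the standard heat balance Q = m·cp·ΔT gives the flow a rack needs. The sketch below assumes plain water properties and that all rack heat goes into the liquid loop. A water/glycol mix has a lower specific heat, so real flows would be somewhat higher. The function name is illustrative.

```python
# Assumptions: properties of plain water, not a glycol mixture.
WATER_CP_J_PER_KG_K = 4186.0   # specific heat
WATER_DENSITY_KG_PER_L = 1.0   # close enough at these temperatures


def required_flow_lpm(
    heat_kw: float,
    delta_t_c: float,
    cp: float = WATER_CP_J_PER_KG_K,
    density: float = WATER_DENSITY_KG_PER_L,
) -> float:
    """Coolant flow in liters per minute needed to carry away heat_kw
    with a supply-to-return temperature rise of delta_t_c."""
    if delta_t_c <= 0:
        raise ValueError("delta_t_c must be positive")
    mass_flow_kg_per_s = heat_kw * 1000 / (cp * delta_t_c)
    return mass_flow_kg_per_s / density * 60


if __name__ == "__main__":
    # 45 C supply with 50-55 C return gives a 5-10 C rise.
    for delta_t in (5, 10):
        flow = required_flow_lpm(200, delta_t)
        print(f"200 kW rack, {delta_t} C rise: {flow:.0f} L/min")
```

A smaller temperature rise means proportionally more flow, so the balancing target for each rack shifts with whatever return temperature the design settles on.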

For GCs, this changes your risk profile fundamentally. In office and multifamily construction, a plumbing leak damages drywall and carpet. In a liquid-cooled data center, a cooling loop leak damages GPU hardware worth more per square foot than the building itself. Your warranty exposure is no longer about property damage; it’s about equipment replacement and lost revenue. Standard GC insurance policies may not cover this. Specialized risk assessment is required, and subcontractor insurance limits need to match the equipment value at risk (typically $2M+ in general liability coverage at minimum).

The installation quality standard for cooling piping in a Vera Rubin-era facility needs to be closer to what you’d expect on a pharmaceutical cleanroom or a semiconductor process line than a commercial plumbing job.

The Trade Shift: From HVAC Techs to Pipefitters

We covered the electrician shortage in the previous post: 439,000 construction workers short nationally, 300,000+ new electricians needed this decade, 30%+ wage premiums. All still true, and getting worse: Vera Rubin’s move to medium-voltage internal distribution (13.8kV or 34.5kV inside the building) and NVIDIA’s reference 800 VDC power architecture require industrial-grade electricians who understand 15kV switchgear and DC power systems. Most commercial electricians have never worked above 480V. Switchgear demand has ballooned 35–274% since 2019.

But the mechanical trade shift is the story most people miss — and it’s the one that matters most for transitioning GCs.

In a conventional data center, your mechanical subcontractor runs CRAC and CRAH units — computer room air conditioning. That’s HVAC work. Your office and multifamily HVAC subs can do this, or learn it quickly.

In a liquid-cooled facility, your mechanical sub is running chilled water loops, CDUs, manifolds, fluid couplings, pressure vessels, and leak detection systems. That’s pipefitter and plumber work. It requires deep familiarity with fluid dynamics, heat transfer, pressure testing, and welded piping systems. It’s the same skill set you’d find on an LNG terminal or a semiconductor fab — not in a commercial building.

And the pipefitter shortage is even worse than the electrician shortage. The U.S. is projected to be short 550,000 plumbers by 2027. The average plumber is over 40. The industry needs roughly 43,000 new plumbers, pipefitters, and steamfitters per year — and those workers are being pulled simultaneously toward LNG construction (57 MTPA coming online in 2026, the fastest capacity growth in LNG history), semiconductor fabs (97 new high-volume fabs planned globally), and now data center liquid cooling.

A single data center project in Wyoming reported needing 1,000+ plumbers and pipefitters for one campus.

The union jurisdictional questions are unresolved: is liquid cooling CDU installation HVAC scope, pipefitting scope, or plumbing scope? The United Association represents ~395,000 workers across all three categories, but local jurisdictions vary, and the work doesn’t fit neatly into any traditional classification. If you’re a GC staffing a liquid-cooled DC project in a new market, expect to navigate these jurisdictional questions on every job.

Commissioning: Orders of Magnitude Harder

In an air-cooled facility, commissioning is primarily electrical — breakers, transfer switches, UPS failover, generator starts. Rigorous, but well-understood.

In a liquid-cooled facility, you’re commissioning a pressurized fluid distribution system that touches every rack. Direct-to-chip cooling may require recommissioning for every new hardware installation, because adding or replacing a rack changes the fluid dynamics of the entire loop. Industry practice currently allows only “partial commissioning” — simulating per-rack impact is, by admission, “very difficult.”

The certification landscape is just emerging. ByteBridge launched the industry’s first Foundational Liquid Cooling Certification (FLCC) in 2025. ASHRAE Technical Committee 9.9 released its first liquid cooling resilience bulletin in September 2024. The supply of qualified commissioning agents has not kept pace with the number of facilities under construction.

For transitioning GCs: invest in commissioning expertise before you bid. The liquid cooling commissioning skill set barely exists at scale. Firms that develop in-house capability for pressurized fluid systems will have an enormous competitive advantage.

Backup Power: From Diesel Generators to On-Site Generation

Power infrastructure for Vera Rubin-era facilities isn’t just bigger — it’s architecturally different. The traditional model of “utility feed plus diesel backup” is being replaced by something closer to an independent power plant.

Utility-scale feeds are now the baseline. Large AI facilities require 138kV, 230kV, or even 345kV transmission connections — the same voltage classes that serve municipalities and heavy industrial plants. Customer-owned substations are becoming standard. If your experience is pulling 13.8kV service to an office campus, the jump to 138kV transmission interconnection is a different world of utility negotiation, right-of-way, and lead time. Grid interconnection queues alone can run 3–5 years in constrained markets.

On-site generation is shifting from backup to primary power. With grid capacity increasingly constrained, operators are deploying gas turbines and fuel cells as the primary power source, not just emergency backup. Gas turbines deliver roughly 50 MW per acre. Fuel cells — led by firms like Bloom Energy — deliver up to 100 MW per acre, run 10–30% more efficiently than gas turbines, and can be deployed in under a year. Industry projections suggest 30% of data center sites will use on-site power as their primary source by 2030, requiring an estimated 8–20 GW of fuel cell capacity alone (with total behind-the-meter generation reaching 25–50 GW across all technologies).
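Those power-density figures translate directly into site planning. The sketch below estimates generation footprint from the MW-per-acre numbers above; the 300 MW campus is a hypothetical example, and the result covers generation equipment only, not setbacks, fuel supply, or substations.

```python
# Power density figures from the article, in MW per acre.
MW_PER_ACRE = {
    "gas turbines": 50,
    "fuel cells": 100,  # quoted as "up to", so this is a best case
}


def acres_needed(load_mw: float, mw_per_acre: float) -> float:
    """Land area needed for generation equipment at a given density."""
    if mw_per_acre <= 0:
        raise ValueError("mw_per_acre must be positive")
    return load_mw / mw_per_acre


if __name__ == "__main__":
    campus_mw = 300  # hypothetical campus load
    for tech, density in MW_PER_ACRE.items():
        area = acres_needed(campus_mw, density)
        print(f"{campus_mw} MW of {tech}: {area:.0f} acres")
```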

Battery Energy Storage Systems (BESS) are replacing diesel generators. In June 2025, FlexGen and Rosendin launched the BESSUPS — the first utility-scale battery system designed as a full UPS replacement for data centers. Unlike traditional UPS systems that provide seconds-to-minutes of bridge power, BESS installations deliver 4–8 hours of extended backup. They’re also bidirectional, acting as virtual power plants during peak grid demand. Microsoft deployed Saft battery systems at its Stackbo, Sweden facility — four 4 MWh units providing both backup and grid services.
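Sizing a battery yard for hours of backup is a simple energy calculation: load times duration. The sketch below uses a hypothetical 100 MW critical load and ignores depth-of-discharge limits, conversion losses, and degradation, all of which push the installed capacity higher.

```python
def storage_mwh(load_mw: float, backup_hours: float) -> float:
    """Usable energy needed to carry load_mw for backup_hours."""
    return load_mw * backup_hours


def fleet_capacity_mwh(units: int, mwh_per_unit: float) -> float:
    """Total capacity of a set of identical battery units."""
    return units * mwh_per_unit


if __name__ == "__main__":
    critical_load_mw = 100  # hypothetical critical load
    for hours in (4, 8):    # backup range quoted in the article
        print(f"{hours} h backup: {storage_mwh(critical_load_mw, hours):,.0f} MWh")

    # The Stackbo installation described above: four 4 MWh units.
    print(f"Stackbo total: {fleet_capacity_mwh(4, 4):.0f} MWh")
```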

What this means for GCs: The power scope on a Vera Rubin-era facility now includes substation construction, medium-voltage switchgear rooms inside the building, fuel cell pads or turbine enclosures, and battery storage yards — all in addition to the traditional electrical distribution. Equipment lead times are brutal: 12–18 months for generators, transformers, and switchgear. The firms that lock in procurement commitments early in preconstruction will deliver on schedule. Everyone else will be waiting.
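To turn those lead times into a procurement deadline, work backward from the date the equipment has to be on site. The sketch below does that at month resolution using only the Python standard library. The need-by date is hypothetical, and the function name is illustrative.

```python
from datetime import date


def latest_order_month(need_on_site: date, lead_time_months: int) -> date:
    """First day of the latest month in which an order can be placed
    and still arrive by the need-by month."""
    # Count months from year zero so the subtraction wraps across years.
    index = need_on_site.year * 12 + (need_on_site.month - 1) - lead_time_months
    return date(index // 12, index % 12 + 1, 1)


if __name__ == "__main__":
    need_by = date(2027, 6, 15)  # hypothetical energization milestone
    for lead in (12, 18):        # lead-time range quoted in the article
        order_by = latest_order_month(need_by, lead)
        print(f"{lead}-month lead time: order by {order_by:%B %Y}")
```

If the order-by month falls before your design is far enough along to release a purchase order, that gap is the schedule risk to raise in preconstruction.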

Who’s Building This? The Specialty Sub Landscape

The firms best positioned for the liquid cooling transition aren’t always the ones you’d expect.

Comfort Systems USA has emerged as the clearest bellwether. Year-end 2025 backlog: $11.94 billion — doubled from $5.99 billion in twelve months. Technology segment (dominated by data centers): 45% of revenue, up from 33% in 2024. They’re explicitly positioning as “long-term service and maintenance providers for complex liquid-cooling systems” with 3 million square feet of modular fabrication capacity, expanding to 4 million by end of 2026.

Southland Industries (#3 on ENR’s Top 50 Mechanical Firms) has built proprietary energy models specifically for data center power density and heat generation — one of the few mechanical contractors with genuine design-build capability here.

Rosendin, Cupertino Electric (now part of Quanta Services), and MYR Group, the major electrical subs, remain strong on the electrical scope (still 40–45% of total cost) but haven’t publicly demonstrated liquid cooling specialty.

New entrants from industrial process piping are appearing. APEX Piping is positioning as a data center liquid cooling specialist. But the major industrial firms that build LNG terminals and chemical plants haven’t visibly entered the DC market yet — a gap that represents both a risk and an opportunity for GCs who can bring those relationships into the data center ecosystem.

What This Means for Your Next Bid

Design for 200+ kW per rack minimum. If you’re breaking ground on a facility designed for 40–80 kW per rack, you’re building something that may be functionally obsolete before it’s commissioned. Specify structural, power, and cooling capacity for at least 200 kW, with growth toward 400–600 kW.

Specify dry coolers, not cooling towers. The 45°C inlet water spec makes evaporative cooling optional in most climates. Dry coolers eliminate water consumption, community opposition, legionella risk, and water treatment complexity.

Budget for $20–30M+ per MW. The all-in cost for Vera Rubin-era AI facilities — liquid cooling ($3–4M per MW), medium-voltage power distribution, structural upgrades, and the labor premium — is projected to run well above the conventional $10–12M benchmark. Some greenfield AI campuses are estimated at $35–60M per MW when all infrastructure is included.

Recruit pipefitters, not just electricians. Your next mechanical subcontractor needs to look more like an industrial process piping contractor than an HVAC company. Build relationships now with pipefitters’ unions and firms that have pharmaceutical, semiconductor, or LNG piping experience.

Get your people certified now. Send your best to ByteBridge FLCC, ASHRAE TC 9.9 training, and Uptime Institute programs. Having certified liquid cooling commissioning staff on your prequalification submittal is a differentiator that no amount of marketing can replicate.

Plan for one- to two-year GPU refresh cycles. NVIDIA’s roadmap: Blackwell (2024), Vera Rubin (2026), Vera Rubin Ultra (2027), Feynman (2028). Each generation increases power density. Your facility infrastructure needs to accommodate at least two generations of upgrades without major construction.
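The density and budget recommendations above reduce to two quick calculations you can run before a bid meeting. The sketch below assumes the per-MW cost figures apply to IT load and uses a hypothetical 100 MW facility. It ignores cooling and distribution overhead when counting racks, so real rack counts will be lower.

```python
def rack_count(it_load_mw: float, rack_kw: float) -> int:
    """Whole racks a given IT load can power, ignoring overhead."""
    if rack_kw <= 0:
        raise ValueError("rack_kw must be positive")
    return int(it_load_mw * 1000 // rack_kw)


def budget_range_musd(
    capacity_mw: float, low_per_mw: float, high_per_mw: float
) -> tuple[float, float]:
    """Low and high all-in budget in millions of dollars."""
    return capacity_mw * low_per_mw, capacity_mw * high_per_mw


if __name__ == "__main__":
    facility_mw = 100  # hypothetical facility size

    for rack_kw in (200, 400, 600):  # design targets from the article
        print(f"{rack_kw} kW racks: {rack_count(facility_mw, rack_kw)} racks")

    # Per-MW cost ranges from the article, in millions of dollars.
    ai_low, ai_high = budget_range_musd(facility_mw, 20, 30)
    conv_low, conv_high = budget_range_musd(facility_mw, 10, 12)
    print(f"AI facility:  ${ai_low:,.0f}M to ${ai_high:,.0f}M")
    print(f"Conventional: ${conv_low:,.0f}M to ${conv_high:,.0f}M")
```

Fewer, denser racks for the same power is the pattern to notice: the rack count drops as density climbs, while the budget per MW moves the other way.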

The Bottom Line

The data center market is splitting in two. Conventional colocation will continue, but the growth, the margin, and the $600 billion in hyperscaler CapEx are flowing toward AI-optimized facilities that look nothing like the data centers built over the past two decades.

For GCs coming from office and multifamily: the pivot to data centers we described in the last post is real, but the target is moving. The skills that get you into conventional DC work — shell construction, site work, basic MEP coordination — are the entry ramp, not the destination. The destination is liquid-cooled, 200+ kW-per-rack, 350 psf, pressurized-piping, medium-voltage facilities that require industrial structural engineering, pharmaceutical-grade piping installation, and commissioning expertise that barely exists yet.

For GCs already building conventional data centers: don’t assume your current capabilities translate. The mechanical trade shifts from HVAC to pipefitting. The electrical scope moves from 480V to medium voltage and 800 VDC. The structural loads rise to 2.5–3.5 times the conventional 100 psf baseline. The commissioning process adds an entire pressurized fluid system. And the liability exposure of a cooling loop leak in a room full of $100,000 GPUs is a different category of risk than anything in conventional construction.

The revenue opportunity is staggering — $88 billion in U.S. data center construction spending in the pipeline, 73% of new AI facilities deploying liquid cooling. The firms that invest now in liquid cooling capability, industrial electrical skills, pipefitter relationships, and commissioning expertise will capture the next wave. Everyone else will be competing for the conventional work that’s rapidly becoming the smaller half of the market.

This is part of an ongoing series on Joshua8.AI exploring where traditional real estate and digital infrastructure collide. Previous posts: Power Is the New Land and The Builder’s Pivot.